Judgment Isn’t Talent. It’s Practice.

The friction that built product judgment is disappearing. Four practices to keep it deliberately.

AI eliminated the reps that used to build judgment as a side effect. If you want to develop judgment now, you have to seek it deliberately.

The traditional ladder (write specs, ship V1s, debug production) built judgment accidentally. That ladder got pulled up.

Not all friction is bad. AI removes bureaucratic friction. The friction that built judgment was reality friction. Keep it.

The people treating AI as a productivity tool are getting faster. The people treating judgment as a practice are getting more valuable. The gap is widening.

You Took the Diagnostic

In the last post, I asked you two questions. What would you have contributed if AI had produced every artifact for your last feature in two hours? And when did you last sit with a real user?

If you loved your answers, this post isn’t for you.

If you didn’t, keep reading. Nearly every high performer I’ve talked to since that post is feeling some version of the same thing. Strong track records, promoted fast, trusted with hard problems. The version I keep hearing: “I feel like my strengths aren’t strengths anymore.”

These aren’t people who lack skill. They’re people whose skills were validated by an artifact-production model that is now compressing. The fear isn’t incompetence. It’s relevance.

Your strengths aren’t obsolete. The system that rewarded them changed. What follows is how you rebuild on different ground.

Three Readers, Three Problems

Not everyone reading this is in the same position.

If You’re Senior or Executive-Level

You probably have more judgment than you realize. Thousands of decisions, hundreds of customer interactions, dozens of product failures. Your problem isn’t a judgment deficit. It’s that you’ve never explicitly named what you do as judgment.

Stop apologizing for the fact that you think for a living. The shift is naming your judgment, leaning into it deliberately, and dropping the reflex to prove value through artifact production.

A distinction worth being honest about. Twenty years of varied decisions across different contexts, with real feedback loops, builds genuine depth.

Twenty years of the same call in the same domain builds pattern-matching that works until the frame breaks. AI is breaking the frame. If your experience has been narrow, the mid-career section below may be a better fit.

For those with genuine depth: your next move is naming what you already do. What are the three things you always push back on in reviews? What patterns make you uneasy before you can articulate why? Name them. The “Naming Your Judgment” section later in this post will show you how.

If You’re Early in Your Career

Here’s the good news first. For the first time, a PM can build a working prototype that demonstrates their judgment in action. You don’t need permission, a team, or a quarter of engineering time. You can show your thinking in a working product, not a slide deck.

But the portfolio isn’t the prototype. It’s the judgment trail behind it. Why this problem. Why this solution. What was killed along the way.

Now the honest part. The traditional path that built judgment for the people above you is disappearing. Junior PMs used to write dozens of specs before developing intuition about what makes a good one. Junior engineers used to debug production incidents until they could smell architectural risk. Junior designers used to iterate through dozens of mocks before developing taste.

Those reps are compressing or vanishing. AI writes the first draft. AI suggests the architecture. AI generates the variations. The reps that built judgment as a side effect of doing the work are being removed from the work itself.

You don’t build muscles by watching workout videos. You build them by doing the reps. If you have a job, seek out the hardest judgment calls on your team and volunteer for them. If you don’t, pick a real problem and build a real solution. Not a case study. A working product.

If You’re Mid-Career

You have some judgment and some artifact skill. Your daily work is a mix of both. The shift isn’t dramatic. It’s a gradual reallocation: less time polishing documents, more time in the activities that sharpen judgment.

The danger is that this reallocation feels unproductive. You’ll feel like you’re doing less. You are doing less, of the thing that’s compressing in value. The question is whether you’re filling that time with the thing that’s appreciating.

Here’s a filter. Writing a strategy doc is one task, but the 10x impact lives in the thesis and the bet, not in the formatting or the appendix.

The 10x question: For any piece of your work, ask: if I were 10x better at this specific part, would it produce 10x the outcome? Where the ceiling is low, automate ruthlessly. Where it’s high, protect and invest.

The Friction You Actually Need

Most people celebrate AI’s speed without noticing what the speed removed.

Bureaucratic friction (formatting requirements, status update meetings, elaborate quarterly planning ceremonies, manual reporting, copy-pasting between tools) doesn’t improve the quality of anyone’s thinking. AI is excellent at removing it, and good riddance.

Reality friction improves the quality of your thinking. It’s the resistance you encounter when your beliefs meet evidence. A customer who contradicts your thesis. An assumption you test and find wrong. Data that challenges what you believed.

This friction doesn’t slow work for the sake of slowing it. It slows work at exactly the moments where speed would substitute polish for understanding.

When AI removes all friction from your workflow, it removes both kinds. A PM who iterates a product doc through AI feedback ten times in an afternoon will produce a polished document. But if none of those iterations involved a customer, an uncomfortable assumption, or evidence that challenged the thesis, the friction removed was the kind they needed most.

The new reps are deliberate reality friction. Practices you choose to keep in a world that’s making everything frictionless.

The New Reps

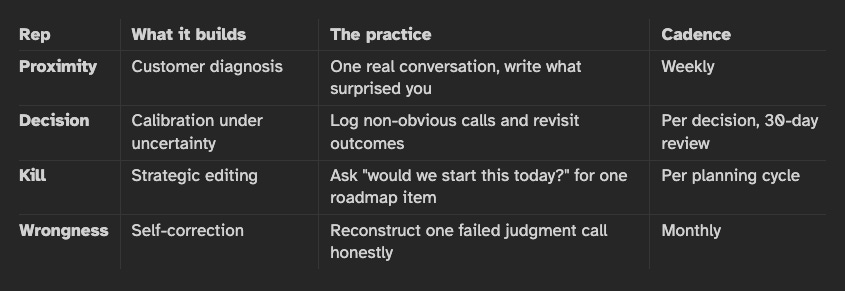

These aren’t productivity hacks. They’re the equivalent of a doctor’s clinical rotations. Four practices that build judgment when the old path through artifact production is gone.

Proximity Reps

The PM who knows their customer deeply generates prototypes grounded in reality. The PM who builds based on how they feel about the problem generates prototypes grounded in projection. AI makes this gap catastrophically wider. The disconnected PM goes from assumption to polished-looking-but-wrong prototype in two hours. Polish masquerades as insight.

The practice: one customer conversation per week. Not a survey. Not a dashboard. A conversation where you can ask follow-up questions and notice what they don’t say. Watching users use your product, not reading summaries about how they use it.

After each one, write one sentence about what surprised you. If nothing surprised you, your sample isn’t varied enough.

When we were building an enterprise API platform, I assumed the decision was which API gateway to license. The safe path was obvious: pick the vendor backed by the Gartner quadrant and move on. Nobody would have questioned it.

Then I talked to the developers who would actually use it and the business stakeholders who would sell through it. The surprise: nobody cared about the gateway. Gateways are commodities. What developers wanted was the experience around the gateway: discovery, exploration, try-before-you-buy, quick-start guides, monetization hooks, support. We built an end-to-end platform shaped by those conversations.

I walked in with a vendor selection question. I walked out building a different product. That reframe didn’t come from a dashboard or a competitive analysis. It came from sitting across from the people who would use the thing.

Decision Reps

Judgment develops through making calls with incomplete information and tracking whether they were right.

AI makes it easier to avoid this. You can always generate one more analysis, one more scenario, one more option set. The tool becomes the avoidance mechanism. But the decision itself is the rep. Not the safe decision that everyone agrees with. The judgment call where reasonable people would disagree, where you’re taking a position based on your read of the situation.

The practice: when you make a non-obvious call, write down what you believe and why before the outcome is known.

Revisit in 30 days. The goal isn’t a batting average. It’s calibration: learning which signals you overweight and which you miss.

Kill Reps

The most undervalued judgment skill is knowing when to stop.

Before AI, killing a project was painful because building was expensive. You’d invested a quarter of engineering time. Sunk cost made killing feel wasteful. Now building is cheap, which should make killing easier. But it doesn’t. You can build a prototype in an afternoon, and the prototype looks good, so the emotional case for continuing gets stronger even when the strategic case doesn’t.

The practice: pick one thing on the roadmap and ask: “If we had never started this, would we start it today?” Not “does the prototype work” but “would customers choose this over doing nothing?” If the answer is no, ask why it’s still alive.

Early in the cloud migration wave, we partnered with a middleware vendor who pitched a compelling story to our executives: a seamless bridge from legacy to cloud without rearchitecting. The thesis made sense on paper. We started the work on one of our largest, most complex product areas.

A few months in, the signal was clear. The “seamless bridge” was adding a layer of abstraction that would age poorly. The cloud-native path was harder, but it was where the industry was going. Every month we spent on the intermediary was a month we weren’t building the real thing.

We killed the partnership and redirected the resources to go cloud-native. The decision wasn’t popular. The original pitch still looked good in slide decks. But the thesis underneath it had expired. The hardest part of killing it wasn’t the sunk cost. It was that the original logic was still defensible. It just wasn’t current.

Wrongness Reps

This is the uncomfortable one. Deliberately reviewing where your judgment failed.

Not a retro designed to make everyone feel okay. A private, honest examination of the calls you got wrong. The feature you championed that customers ignored. The assumption you carried too long. The competitor signal you dismissed.

The practice: monthly, pick one judgment call that didn’t land. Reconstruct your reasoning at the time. Not what you couldn’t have known, but what was available and you didn’t weight correctly. This is the rep that separates people who have ten years of judgment from people who have one year of judgment repeated ten times.

A note on AI scaffolds. Review tools, rubrics, and frameworks can accelerate the early stages of all four reps. Use them when you’re learning the territory. But recognize when the scaffold is doing the cognitive work for you.

A PM who prepares for a hard review by asking “what will my leader challenge?” is practicing the skill of finding their own blind spots. An AI pre-review that answers that question in advance shifts preparation from “find my blind spots” to “fill in the blanks.” The output looks identical. The learning is categorically different. The scaffold should come down before it becomes load-bearing.

Naming Your Judgment

If you’re experienced, the four reps above will sharpen skills you already have. But there’s a practice specific to your level: making your judgment explicit.

Most experienced leaders have never named their judgment model. They review a product doc and have a reaction. They push back in a meeting. They redirect a team. But ask them “what are your three recurring questions when you evaluate a product decision?” and they struggle to articulate it.

What they think is intuition is actually a set of structured heuristics they’ve never made explicit.

Here’s what the exercise looks like. A product leader sits down to answer one question: “What do I always push back on in reviews?” They start listing:

Has the PM talked to customers directly?

Is the hypothesis named and falsifiable?

Is the pre-mortem honest or performative?

Is opportunity cost acknowledged?

What’s the strongest case against this?

They end up with eleven dimensions. Not invented from theory. Extracted from years of their own review conversations.

Then they go further. They add gates: before the review even starts, has the PM talked to customers directly? Were they surprised by anything they heard? If not, the review doesn’t proceed. Not because the rubric says so, but because the leader knows from experience that a doc built without customer contact isn’t worth reviewing at the detail level.

This is the portable layer of judgment: your repeatable questions, standard frameworks, and known failure modes. The stuff you can name and encode.

The live layer is different: recognizing when your own framework doesn’t apply. You can only develop it through direct exposure (that’s what proximity reps and wrongness reps are for). But the better you articulate the portable layer, the more bandwidth you free for the live layer. You stop spending energy on the patterned decisions and start noticing where the patterns break.

Stepping Back

The AI discourse is dominated by tools, techniques, and capabilities. Learn this framework. Master this prompt pattern. Use this tool. Technical proficiency is a real advantage, and it’s foolish to ignore it.

But the deeper game isn’t tools. It’s the quality of thinking you bring to what the tools produce. The tools will keep getting better. The quality of thinking will only improve through the specific practices in this post.

The irony is that the people most drawn to AI productivity tools are often the people who most need to slow down and do the judgment reps. The tools feel productive. The reps feel slow.

Tools without reps produce polished artifacts built on weak foundations. Reps without tools produce strong judgment that AI can then amplify 100x.

The order matters.

Treat judgment development as continuous. Not something you did on the way up and left behind. A permanent practice, like a surgeon who continues clinical rounds regardless of seniority.

In Practice

Pick the rep that maps to your biggest gap:

If you can’t remember your last customer conversation: Proximity reps. Start Monday.

If you make safe decisions that everyone agrees with: Decision reps. Start logging the calls that scare you.

If you keep features alive too long: Kill reps. Ask “should this exist?” before “is this good?”

If you haven’t examined a recent failure honestly: Wrongness reps. Pick one. Reconstruct.

If you can’t articulate what you review for: Name three of your recurring questions. That’s where the “Naming Your Judgment” work begins.

Not all five. One. The one where your answer is weakest. That’s where judgment grows.

Next in this series: how customer diagnosis and solution evaluation work in practice when AI is doing the building. That’s where the judgment reps meet the daily work.