AI Took the Artifacts. What's Left Is Judgment.

AI didn't take your job. It revealed what your job always was.

AI collapsed the production layer of knowledge work. The existential question isn’t whether AI will take your job. It’s whether you were ever providing judgment or just producing artifacts.

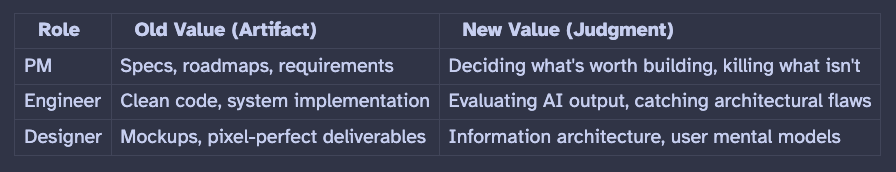

Specs, code, designs, prototypes: all producible in minutes now. If your value was the artifact, you have a problem.

Judgment (deciding what to build, evaluating whether it’s right, knowing when to kill it) is the durable skill. It applies across PM, engineering, and design.

Speed without judgment is dangerous. The faster you can build, the more judgment matters, not less.

The Question Everyone Is Asking

A few weeks ago, a senior executive I know well pulled me aside after an alumni reunion. Twenty years of experience. Strong track record. Led large teams through multiple product cycles. He asked me, quietly: “With everything AI can do now, do they still need someone like me?”

He’s not alone. I’ve heard versions of this from PMs, engineers, engineering managers, and designers. It shows up in three flavors. “I don’t know where to start with AI.” “I’m not technical enough to survive this.” “My entire role is at stake.”

Different words, same fear underneath. And it's not just hallway anxiety. It's boardroom strategy.

The people building AI are saying it plainly. Anthropic’s CEO Dario Amodei wrote in January 2026 that AI will “disrupt 50% of entry-level white-collar jobs over 1 to 5 years” and called the coming disruption “unusually painful.” Mark Zuckerberg told investors that AI will do the work of mid-level engineers.

And the enterprise results are already here. Klarna's AI agent does work equivalent to that of 850 human agents, and the company has seen a roughly 50% workforce reduction through attrition since 2022. Salesforce cut its customer support headcount from 9,000 to 5,000. Block cut 40% of its workforce, with Jack Dorsey explicitly attributing it to AI enabling smaller teams.

These are not pilots. These are not experiments. This is the new operating reality at companies with tens of thousands of employees. The question that the executive asked me is the question the entire industry is asking. The rest of this piece is my answer.

The Misdiagnosis

I know a PM who spent a weekend taking a prompt engineering course. An engineer who doubled down on LeetCode prep. Multiple designers who started learning to code. All smart, driven people. All responding to the same instinct: the threat feels technical, so the solution must be technical too. Learnable. Controllable.

But the threat isn’t technical. The threat is that your value was defined by the artifacts you produced.

For decades, specs, code, mockups, and test plans were the job. You produced them, stakeholders consumed them, and the quality of the artifact stood in as a proxy for the quality of your thinking. AI now produces those artifacts. Fast, cheap, and increasingly well.

If the artifact was your value, then yes, you have a problem. But the artifact was never the real value. It was a container for something else.

That something else is what this piece is about. Not the organizational shift (I’ve written about that). This one is about you. What’s left when the artifacts disappear?

What Judgment Actually Is

An AI tool generates a prototype in two hours. Two people review it. One says, “Looks great, let’s show stakeholders.” The other says, “This solves the wrong problem. The user doesn’t need a dashboard; they need an alert that fires before the problem happens.”

Same prototype. Same tool. Same speed. The difference is judgment: the ability to distinguish between correct and right.

Correct means it works as specified. Right means it solves a problem worth solving, for a person who actually has that problem, in a way that fits how they work.

What remains when production collapses is this distinction, applied dozens of times a day. Deciding what to build and, harder, what not to build. Evaluating whether the output is right for the problem. Knowing when to kill something that isn't working, even when the team is excited about it. Shaping iteration toward an outcome that matters, not just toward something that looks finished.

This isn’t one skill. It’s knowing your customer deeply enough to spot the wrong problem before anyone builds it. Understanding your business model well enough to kill a feature that users love but economics don’t support. Reading a stakeholder’s objection and knowing whether it’s political or substantive. The instinct that says “this will break at scale” before anyone runs a load test.

None of these are AI skills. They’re accumulated through proximity, reps, and paying attention. AI doesn’t replace any of them. It just makes them the only ones that matter.

The value is shifting from production to judgment, and from judgment to the speed of the judgment loop. How fast can you go from insight to prototype to validated learning? That loop speed is the new measure of effectiveness.

Klarna's CEO, Sebastian Siemiatkowski, put it bluntly: during the first two years of their AI strategy, they focused on hiring engineers. Then it "switched, actually. It's almost the opposite." The business knowledge of non-coders (people who can use AI to build but who also know which dashboards or features are needed) has become more valuable.

The engineers? "They're like, 'Okay, I coded this feature. What do I do next?'" That's the artifact-producer without judgment, described by a CEO who's lived through the transition.

The Same Shift, Every Role

Siemiatkowski was describing engineers. But the pattern is identical across every knowledge work role. Wherever the artifact was the identity, the same reckoning is happening.

Developers who define their value as “I write clean code” are in the same position as PMs who define their value as “I write good specs.” The artifact is being commoditized.

The junior dev valued for cranking out CRUD endpoints? Fully automated now. The mid-level dev valued for React expertise and well-structured components? AI does that reliably. Even the senior dev who prides themselves on elegant architecture gets nervous when Claude Code scaffolds a whole system in minutes.

But here's where it gets interesting. The developer who looks at AI-generated code and says, "This won't survive 10x load," or "this data model becomes a nightmare when we add multi-tenancy," or "this is solving the wrong problem at the wrong layer" is more valuable than before, not less.

AI produces plausible code at incredible speed, and the cost of bad judgment is now multiplied by the speed of production.

The PM who can go from customer insight to a validated prototype in a day. The developer who can evaluate, reshape, and stress-test AI output faster than the AI produces it. Both are operating as editors of AI output, not producers of artifacts.

The role is different. The judgment layer is the same.

The Uncomfortable Truth

Production was slow enough to hide weak judgment for decades.

When a spec took two weeks to write, nobody asked whether the PM had genuine insight or was just organizing inputs from stakeholder meetings. The slowness looked like rigor.

When code took a full sprint to ship, nobody asked whether the engineer understood the problem deeply or just implemented the ticket as written. The effort looked like value.

When a design went through three rounds of review, nobody asked whether the designer was making real decisions or just iterating toward consensus. The process looked like craft.

Think about how many sprint retrospectives you’ve sat through where the team debated velocity, story points, and estimation accuracy, but never once asked: “Was this the right thing to build?” The process machinery was so consuming that judgment never came up. The speed of the cycle was the measure of success, not the quality of the decisions that fed it.

AI compresses the timeline and makes the gap visible. The PM who never had a strong point of view now has nothing to hide behind. The engineer who never questioned requirements is exposed when AI can implement any requirement instantly. The designer who relied on iteration-toward-consensus discovers that AI converges on “acceptable” in minutes, and that was apparently all they were doing too.

The people who remain indispensable are the cream that rises to the top. Their judgment was always the real value; they also happened to produce the artifacts. AI just made this truth undeniable.

And the economics confirm it: Klarna’s workforce shrank by 50%, but revenue per employee went from $300,000 to $1.3 million. The people who stayed got more leverage and more compensation. As Siemiatkowski put it: “My employees know that they’re driving efficiencies, but they are also participating in getting the benefit of that.”

If AI writes the code, what is the developer? If AI writes the spec, what is the PM?

The answer is the same in both cases: you’re the person who knows what to build and whether it’s right. The ones who thrive are the ones who were always doing that. The ones who were primarily valued for the mechanical act of production are the ones in trouble.

The False Confidence Trap

Speed without judgment isn’t just useless. It’s actively dangerous.

Picture this: a PM uses AI to build a prototype in two hours. It looks production-ready. It has real interactions, plausible data, and smooth flows. The PM shows it in a stakeholder review.

Excitement builds. A team forms around it. A roadmap shifts. Resources redirect.

The prototype solved the wrong problem. But nobody questioned it because it looked so real. Polish masqueraded as insight.

The reasonable objection: “If I can build something in two days instead of two months, what’s wrong with getting it wrong? I’ll throw it away and start over.” For personal projects, this is genuinely true. The throwaway cost is trivial.

In an organization, nothing is truly throwaway. The artifact was cheap. The organizational momentum it created is expensive to reverse. Decisions crystallized around that prototype. A VP mentioned it in a board update.

The cost isn’t the code. It’s the conviction that builds around a convincing wrong thing.

And even individually, not all throwaway cycles are equal. The PM who builds and discards five prototypes grounded in deep customer knowledge is triangulating toward the right answer. The PM who builds and discards five prototypes grounded in vibes is just busy. One is iterating toward insight. The other is taking a random walk.

Klarna learned this at company scale. Their AI chatbot replaced the equivalent of 850 human agents. The efficiency metrics looked like a proof of concept. Then service quality declined, and the company had to rethink.

CEO Siemiatkowski now says they’re reversing course on full AI support: “We think it’s going to be like the future to offer human support. It’s going to be like the VIP treatment.” The initial metrics masked a judgment gap. Knowing which interactions need a human and which don’t is itself a judgment call, and they got it wrong by optimizing for speed alone.

What Fuels Judgment

If judgment is what remains, what fuels it? The answer is unglamorous: customer proximity.

Not quarterly research rituals. Not persona documents. Not reading a summary of someone else’s user interviews. Actual proximity.

Knowing your user’s workflow, their frustrations, their workarounds, the emotion they feel when something breaks.

The PM who knows their customer deeply generates prototypes grounded in reality. The PM who builds based on how they feel about the problem generates prototypes grounded in projection. AI makes this gap wider and the consequences steeper.

The informed PM goes from insight to validated prototype in a day. The disconnected PM goes from assumption to polished-but-wrong prototype in two hours. The speed of production amplifies whatever customer understanding you bring to it, strong or weak.

The existential fear ("AI will replace me") asks the wrong question. What AI actually exposes is whether you were ever providing judgment value, or just organizing the process. Customer proximity is what separates the two.

The Existence Proof

My wife trades a specific swing trading strategy. Not day trading. Not position trading. Swing trading, with its own universe of techniques, edges, and traps. She has her own rules, her own stock universe, her own journaling discipline for every position she enters and exits.

There are dozens of commercial trading apps. Some do scanning well. Some handle journaling. None integrates all of it into a single workflow that matches how she actually thinks about markets. So I built one for her, using AI as the execution layer. Real money is at stake, which means precision matters.

The application pulls real-time quotes, runs market scans, calculates position sizing and risk parameters, tracks opportunities through her specific pipeline, and journals every entry and exit. I’m not reviewing code line by line. The judgment that mattered was knowing her domain deeply enough to specify what “right” looked like.

A quarter of the way in, I hit a wall. I’d started with a rapid prototyping framework that worked for the initial build. But the app needed real-time streaming for live price tickers and direct front-end integration with multiple APIs. The framework’s architecture wasn’t built for that interaction pattern.

Could a more experienced developer have made it work? Maybe. But the point isn’t the technical choice. The point is that I recognized the mismatch because I understood the need, not because I understood the framework’s internals.

I redirected to a stack built for the interaction model the app required: proper streaming, direct API calls, and a front-end architecture that matched how a trader actually uses the tool. Her needs drove that technical decision, not the other way around. Someone without domain knowledge wouldn't have known the existing behavior was wrong. The judgment to redirect early, a quarter of the way in instead of all the way through, came from understanding what the user actually required.

If I’d been wrong about her workflow, if I’d been building from assumptions instead of proximity, AI would have helped me build the wrong thing beautifully. Twice. The tool is neutral. Judgment is the variable.

Stepping Back: What It Means for You

Remember the executive from the beginning of this piece? Twenty years of experience, strong track record, asking quietly: “Do they still need someone like me?”

Here’s my honest answer to him, and to you: it depends on what “someone like you” actually means.

If it means the person who coordinated the team, organized the process, and delivered the artifacts on time, then the ground is shifting under your feet. That’s real. Pretending otherwise helps no one.

Roles will compress. The disruption is already happening, and it will accelerate.

But if “someone like you” means the person who knows the customer deeply enough to spot the wrong problem before it’s built, who has the judgment to redirect a team when the polished prototype is solving the wrong thing, who understands the domain well enough to know what “right” looks like before anyone writes a line of code, then you’re more valuable than you were a year ago. Not less.

The path forward isn’t “learn to code” or “learn to prompt.” Those are technical solutions to a judgment problem.

The Judgment Diagnostic

Here’s something concrete to do this week.

Pick the last feature or project you shipped. Ask yourself one question: if AI had produced every artifact (the spec, the code, the design, the test plan) in two hours, what would you have contributed that the AI couldn’t?

If your honest answer is “I coordinated the team” or “I wrote the requirements clearly” or “I implemented it cleanly,” that’s the artifact layer. That’s what’s compressing.

If your answer is “I knew this was the right problem because I’ve watched 30 customers struggle with it” or “I caught a flaw the team missed because I understood the second-order effects” or “I killed a direction that looked promising because the unit economics didn’t work,” that’s judgment. That’s the layer that becomes more valuable, not less.

Be honest about which answer is yours. Not which answer you aspire to. Which one is true today.

Then ask: when was the last time you were with a real user? Not reading a research summary. Not reviewing analytics. Actually watching someone use your product or describe their problem in their own words.

If you can’t remember, that’s your starting point. Not learning to prompt. Getting closer to customers. That’s where judgment comes from, and judgment is what remains.

If you did this exercise and didn’t love the answer, the next post is for you. Judgment isn’t fixed at birth. It can be cultivated deliberately. But the path isn’t what most people expect. More on that soon.
