The Operating Model for When Building Is Free

AI is changing how we build software. Next, it changes how we organize around it.

The cost of building software is collapsing. Your operating model didn’t notice.

The separation of Product, Engineering, and Design was an artifact of expensive building. That constraint is gone.

The new competitive unit isn’t a cross-functional team. It’s a domain expert with judgment and AI leverage.

Moats have shifted from what you build to how deeply you understand who you build it for.

Three Builds, One Pattern

My wife trades a specific swing trading strategy. Her own rules. Her own universe of stocks. Her own journaling discipline for every position she enters and exits.

There are commercial trading apps. Dozens, in fact. Some do scanning well. Some handle journaling. Some are strong on position management.

None does all of it the way she needs it, integrated into a single workflow that matches how she actually thinks about markets. So she’s always stitching tools together, compromising on one dimension to get another.

So I built one for her. Not a toy. Real money is at stake, which means precision matters.

The application pulls real-time quotes, runs market scans, calculates position sizing and risk parameters, tracks opportunities through her specific pipeline, and journals every entry and exit. Under the hood, it integrates with multiple data providers and systems. When your P&L depends on the accuracy of what’s on screen, “close enough” isn’t an option.
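To make "position sizing and risk parameters" concrete, here is a minimal sketch of one common approach, fixed-fractional sizing for a long position. It is an assumption for illustration only: the actual rules in the app are not described here, and the names `size_position`, `PositionPlan`, and `risk_fraction` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class PositionPlan:
    shares: int              # whole shares to buy
    risk_per_share: float    # loss per share if the stop is hit
    capital_at_risk: float   # total loss if the stop is hit


def size_position(account_equity: float, risk_fraction: float,
                  entry_price: float, stop_price: float) -> PositionPlan:
    """Fixed-fractional sizing for a long trade (illustrative rule, not the author's)."""
    if not 0 < risk_fraction < 1:
        raise ValueError("risk_fraction must be between 0 and 1")
    risk_per_share = entry_price - stop_price
    if risk_per_share <= 0:
        raise ValueError("stop_price must be below entry_price for a long position")

    # Risk budget for this single trade, e.g. 1% of the account.
    max_risk = account_equity * risk_fraction

    # Cap the size by the risk budget and by what the account can afford.
    shares = min(math.floor(max_risk / risk_per_share),
                 math.floor(account_equity / entry_price))
    return PositionPlan(shares, risk_per_share, shares * risk_per_share)


plan = size_position(account_equity=50_000, risk_fraction=0.01,
                     entry_price=40.0, stop_price=38.0)
print(plan.shares, plan.capital_at_risk)  # 250 shares, 500.0 at risk
```

The point of the sketch is the precision requirement: a wrong stop or a rounding slip changes how much real money is exposed, which is why the inputs are validated before any size is returned.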

I’m not an engineer. I used to be, a long time ago. That background helps at the margins: I know the terminology, and I have intuition for what an AI assistant means when it discusses system architecture.

But I’m not reviewing code or debugging implementations. I built this because I understood her domain, I knew what “right” looked like for her workflow, and the distance between “I want this” and “this exists” has collapsed to a conversation with an AI coding tool. The skill that mattered was product judgment, not engineering expertise. And the biggest barrier for most people isn’t technical skill; it’s the mental block that says “I can’t build software.”

Then I built a workout tracker for the family. An iOS app that does exactly what we need. A few more iterations and our Strava subscription cancels. Not because Strava is bad. Because its value proposition was building what I couldn’t build myself, and that constraint no longer holds.

Then, in a professional context (one I can’t talk about freely yet): enterprise-grade software, built in a compressed timeframe. The same scope would have filled a full quarter’s roadmap a year ago. Not a prototype. Not a toy. Production-ready, with feature upgrades beyond the existing solution.

Three different scales: personal, consumer, enterprise. The complexity is genuinely different. A personal tool answers to one user. Enterprise software answers to SLAs, compliance requirements, and thousands of concurrent users.

What you need around each is different: the trading app needed my judgment alone; the enterprise project needed a small team of domain experts. But the pattern is the same. In none of these was I acting as an engineer. I was acting as a domain expert with AI leverage, deciding what to build, evaluating whether it was good, and directing an AI to handle the implementation.

The interesting question isn’t that I could do this. It’s what it means for the way we organize teams, companies, and entire industries around building software.

This Isn’t Just Me

A reasonable objection: “Sure, you built some personal tools. But is AI-generated code actually production-grade?”

The data from the last twelve months says yes. Decisively.

The head of Claude Code at Anthropic hasn’t opened an IDE in months. He shipped 259 pull requests and 497 commits in a single month, all written entirely by AI. Anthropic reports that 70 to 90 percent of their code company-wide is now AI-generated. At OpenAI, nearly all engineers now use Codex, merging 70 percent more pull requests weekly.

Spotify’s co-CEO told investors on their February 2026 earnings call that the company’s best developers “have not written a single line of code since December.” Engineers use an internal system integrating Claude Code with Slack: direct a bug fix from your phone on the morning commute, receive a working build before you arrive at the office.

But here’s the distinction that matters: not all AI coding is equal. Early studies showed AI-assisted code introducing more bugs and higher churn. Those studies measured developers using basic code completion: autocomplete on steroids.

The real shift happened with agentic tools. Systems like Claude Code and Codex don’t just suggest the next line of code. They understand entire repositories, navigate across files, run tests, interpret failures, and iterate autonomously. The gap between prompting a model and directing an agent is the gap between dictating to a typist and collaborating with an engineer who works at machine speed.

SWE-bench, the industry standard for measuring AI’s ability to resolve real software engineering tasks, went from a 2 percent resolution rate in early 2024 to over 80 percent by late 2025. A 40x improvement in under two years. At the frontier companies, the shift has already happened. For the next tier, it’s happening now. The direction is not in question.

The scale shows up in the commit logs. Claude Code alone now accounts for 4 percent of all public GitHub commits, with SemiAnalysis projecting 20 percent by end of 2026. That only counts commits explicitly attributed to the tool, and it’s only one tool. Factor in unattributed usage and competitors like Codex, and the real share of AI-authored code is already considerably larger.

This matters because agentic AI changes what the human needs to do. You’re no longer writing code or even reviewing code line by line. You’re setting direction, evaluating output, managing context, and deciding when to reframe a problem the AI is stuck on.

Sitting back and asking the agent to do everything produces useless output packaged beautifully. The builder has to be an active orchestrator, learning and refining tactics with every feedback loop. That skill compounds.

This piece isn’t about pasting code from a chatbot. It’s about domain expertise and active orchestration producing production-grade software. That capability is real, it’s here, and it changes the economics of everything downstream.

Why the Roles Blurred

The separation of Product Management, Engineering, and Design into distinct functions was never a law of nature. It was an economic response to a constraint: building software was expensive.

To be clear: the underlying skills are real and valuable. Understanding users deeply is a craft. Visual and interaction design is a craft. Systems architecture is a craft. None of that goes away.

What was an artifact of cost was the organizational separation of these crafts into distinct roles connected by handoffs.

Product Management existed to reduce waste. When building the wrong thing cost six months of engineering time, someone needed to figure out what to build before the meter started running. The entire discipline of product discovery (user research, prioritization frameworks, roadmap negotiations) was a risk mitigation strategy against the high cost of building.

Design existed to get it right before implementation. When a redesign meant throwing away a full sprint of engineering work, you needed high-fidelity mockups and usability testing upfront. Iteration was too expensive to do in code.

Engineering existed as a distinct, specialized discipline because writing code was the scarce skill. Translating business requirements into working software required years of training and hard-won experience.

Each function represented genuine expertise. Together, they created an organizational structure optimized for a world where building was the bottleneck.

That world is gone for the companies paying attention. For the rest, it’s going. The timeline is the only question.

When building costs approach zero, when you can prototype in hours, iterate in minutes, and ship in days, the rationale for separating these functions into handoff-based pipelines dissolves. You don’t need a separate design phase when redesign costs nothing. The entire waterfall-in-disguise that most “agile” teams actually run becomes overhead without a purpose.

The spec doesn’t die, but it transforms. Writing is thinking, and the discipline of articulating what you’re building and why remains valuable. But the spec as a handoff document between PM and engineering loses its reason to exist. It becomes a living collaboration artifact between the builder and their AI tools: a place where agents surface gaps in your thinking, suggest directions you haven’t considered, and keep context updated as the product evolves.

The Abstraction Layer Shift

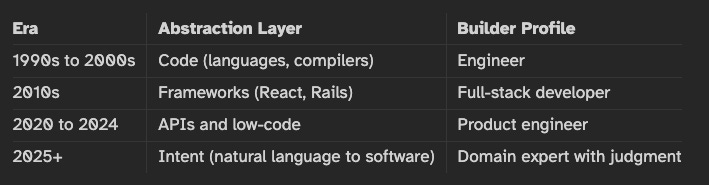

What happened is a steady climb in the layer of abstraction at which builders operate.

At each step, the barrier to entry dropped and the relevant skill shifted. When the abstraction layer was code, you needed an engineer. When it was frameworks, you needed a full-stack developer. When it was APIs, you needed a product engineer who could wire services together.

Now the abstraction layer is intent. The builder works in natural language. The AI handles implementation. The bottleneck is no longer “can you build it?” It’s “do you know what to build, and can you tell whether it’s good?”

The New Builder

The old hiring question was: “Can this person build it?”

The new hiring question is: “Does this person know what’s worth building?”

Think of it as a Venn diagram. On one side, the PM who develops technical comfort and leans into AI as a building tool. On the other, the engineer who builds genuine product sense and judgment about what’s worth creating. The new builder lives in the overlap.

The people who struggle are on the edges: PMs who only coordinated without developing product instinct, engineers who only wrote code without building judgment about what to build. Both roles, as traditionally defined, are under pressure. The intersection is where the leverage lives.

When code is commodity, five things define that intersection:

1. Discernment. Knowing what good looks like. And, harder, knowing what “good enough” looks like. The ability to evaluate AI-generated output and decide whether it meets the bar. This is the quality of your internal evaluation function, and it compounds with experience.

2. Judgment. What to build. What to kill. When to ship. When to stop iterating. These are product decisions, but they’re no longer the exclusive domain of product managers. Anyone building with AI is making these calls continuously, in real time, as part of the building process itself.

3. Domain depth. Understanding the problem space better than a model can infer from its training data. My wife’s trading rules aren’t in any dataset. The specific compliance requirements of a regulated industry aren’t captured in a general-purpose model. Domain expertise is the input that makes AI output actually useful.

4. Systems thinking. Not writing the code, but understanding how the pieces interact. Where the failure modes live. What breaks at 10x scale. What the second-order effects of a design decision are. This is engineering judgment without the engineering implementation. It becomes more valuable, not less, when AI handles the implementation.

5. Distribution instinct. When building is free, the only true waste is building something nobody uses. Distribution isn’t marketing. It’s the instinct to put something in front of real users fast, read their reaction, and iterate. The builder who ships to 5 real users on day one learns more than the builder who perfects in isolation for a month. Distribution is part of the building process now, not a phase that comes after.

Think of it like a film director. They don’t operate the camera. They don’t edit the footage frame by frame. But they know exactly what the shot needs to look like, why this scene matters to the story, and when to call cut. The quality of the film depends on their vision, not their technical execution.

The new builder is a director, not a camera operator. And like a director, they have checks and balances: build a feature in one session, then open a fresh session and ask it to act as an independent consultant, reviewing what was built across architecture, coding standards, tests, and spec. Agents checking agents. More rigorous than most human code review, if you set it up deliberately.

But this raises an uncomfortable question. If a small group of people with domain knowledge, discernment, and AI leverage can build production software in hours, what exactly is the 40-person product organization doing? Not all 40 are doing coordination work. But more of them than anyone wants to admit.

The Agile Industrial Complex

Here’s the honest answer: most of what a large product organization does is coordination.

Standups to sync blockers. Planning poker to estimate specialist effort. QA handoffs. Backlog grooming sessions that are really prioritization theater. Status updates. The weekly sync about the other weekly sync.

These aren’t make-work. They’re genuine solutions to a genuine problem: when building requires many specialists working in concert, you need mechanisms to keep them aligned.

Not all rituals are equal, though. Retrospectives (learning from what happened) and architecture reviews (systems thinking across a complex product) remain valuable even in small teams. What becomes overhead is the coordination machinery: the rituals that exist to synchronize handoffs between specialists.

I call this the agile industrial complex. Not to be dismissive of the people who operate within it, but to name what it is: an organizational structure purpose-built for a constraint that is rapidly disappearing.

If one person can hold the full context of what needs to be built, the coordination cost drops to zero. No handoff from PM to engineering. No translation from design mockup to code. No standup to sync on who’s blocked. The entire overhead vanishes. Not because it was wasteful, but because the problem it solved no longer exists at the same scale.
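One rough way to see why this overhead shrinks so sharply is to count pairwise communication links in a group. This is a common simplification, not a measurement of any real organization, and the helper name `pairwise_channels` is made up for this sketch. The team sizes are the ones used in this piece.

```python
def pairwise_channels(people: int) -> int:
    """Distinct person-to-person links in a group of `people` (n choose 2)."""
    if people < 0:
        raise ValueError("people must be non-negative")
    return people * (people - 1) // 2


# Solo builder, a pair of experts, a 5-person outcome team,
# an 8-person squad, and a 40-person product organization.
for size in (1, 2, 5, 8, 40):
    print(f"{size:>2} people -> {pairwise_channels(size):>3} channels")
```

A single builder has zero links to maintain, a team of 5 has 10, an 8-person squad has 28, and a 40-person organization has 780. The links grow roughly with the square of headcount, which is the gap the coordination rituals were built to manage.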

What Dies, What Survives

What dies is the coordination layer. Scrum masters. Program managers. Release managers. Managers whose primary function is orchestrating handoffs between specialists.

An important distinction: managers whose value is orchestration go. Managers whose value is technical mentorship, architecture guidance, or strategic prioritization transform into the domain experts the new model needs. They don’t disappear; they become more leveraged.

What survives, and becomes more valuable, is domain expertise. This deserves more attention than the things that die, because this is where the opportunity lives.

When a product needs to scale, you still need someone who deeply understands operational reliability. When it operates in a regulated industry, you still need someone who knows compliance inside and out. Security, infrastructure, data governance: these require genuine expertise that AI can augment but not replace. These experts, wielding AI, become dramatically more productive than the teams they replace.

The critical distinction is this: you need the expert, not the team.

| Capability | Old Model | New Model |
| --- | --- | --- |
| Building features | 8-person squad (PM, 4 engineers, designer, QA, EM) | 1 to 2 domain experts with AI leverage |
| Scale and reliability | Ops team of 6 | Ops expert + automated infrastructure |
| Security and compliance | Security team + audit cycles | Security expert directing AI-powered tooling |
| Coordination | Managers, scrum masters, program managers | Radically lighter. Organic alignment replaces managed coordination. |

This is not the argument that one person can do everything. At enterprise scale, you still need teams. But the difference is why the team exists. In the old model, the team existed to coordinate the act of building. In the new model, 3 to 5 domain experts collaborate because their domains intersect, not because the building process demands it. The team’s value is combined judgment, not coordinated labor.

The expert who knows what needs to be true in security, in compliance, in operational resilience becomes more leveraged than ever. The coordinator who existed to manage the handoffs between those experts and the engineers who built things? That’s the role the new model no longer requires.

Two Games, Two Moats

The AI era splits software into two fundamentally different games. The rules are different. The moats are different. Conflating them is a strategic error.

Game 1: Build for Myself

I built a trading application and a workout tracker with zero intention to monetize either. This category is growing fast. For friends-and-family scale, you just deploy and start using it. No distribution required. No go-to-market strategy. No fundraising.

The impact on SaaS is death by a thousand cuts. Every customer who builds their own tool is a cancelled subscription. Not a competitor. Something worse. A customer who simply doesn’t need you anymore. They didn’t switch to an alternative. They just... left.

The Strava example is instructive. What was Strava’s moat against me building my own tracker? Feature parity? I’ll match it in weeks. My data? It lives on my phone; they don’t own it. Their brand? Irrelevant to a user who can build exactly what they need.

What might survive is Strava’s social graph: the community, the leaderboards, the network effects. But the core product (tracking workouts, analyzing performance) is pure commodity now. The moat was never the features. It was the assumption that users couldn’t build those features themselves.

Game 2: Build for Others

If features are increasingly cloneable, what actually defends a company?

The answer depends on complexity. A simple workflow tool or dashboard can be replicated in days. A compliance platform with jurisdiction-specific logic across 50 countries takes longer. But even for complex B2B software, the timeline to functional parity has compressed dramatically. What used to take a competitor years now takes months. What took months takes weeks. Feature accumulation is no longer a durable advantage.

What remains is harder to copy and slower to build:

Depth of customer understanding. Knowing their problems before they can articulate them, because you’ve spent years immersed in their context.

Relationship capital. Earned trust, demonstrated reliability, and switching costs built on consistent delivery.

Proprietary data. Years of accumulated customer interactions, workflow patterns, and edge cases embedded in the product. A new entrant can clone your features but not your data. Feed that data into AI-powered systems and it becomes a compounding advantage that widens over time.

Helping customers win. The value isn’t your product; it’s what your product enables them to achieve. How effectively are you making your customers successful?

The moat is not your product. The moat is how deeply you understand what your customer needs to succeed, and how effectively you help them get there. This is a knowledge moat, not a technology moat. The moment “build it myself” becomes easier than “this vendor truly understands my needs,” the subscription cancels.

In Practice: The Incumbent’s Playbook

For established companies, the org chart is now the biggest liability. It was designed for a world where building was expensive and coordination was the primary challenge.

A caveat: none of this happens overnight. No innovation is embraced equally across an ecosystem. There’s a bell curve of adoption, and the agile industrial complex has institutional defenders: SAFe training programs, Scrum Alliance certifications, an entire consulting industry built on the current model. It won’t go quietly.

But the organizations that resist this shift won’t just fail to thrive. They’ll fail to survive. The pace of AI acceleration is breathtaking. What felt like a 5-year transition window in early 2025 looks more like 18 months from where we stand now.

The prudent path for large enterprises is the lab model: make a small bet, prove the new operating model with a single team, measure the results, and expand gradually. Not a company-wide reorg announced at an all-hands. A quiet experiment that generates undeniable evidence.

Here are four moves that matter.

1. Collapse functional silos into outcome teams.

This is the structural move that everything else depends on. Not a “squads” rebrand with the same handoffs under a new name. Actual teams of 3 to 5 people with end-to-end ownership of a customer outcome. No PM-to-Engineering-to-Design pipeline.

Each person is a domain-expert builder with AI leverage who can take something from concept to production. The team exists to learn together, not to coordinate the building process. Start with one team. Give them a real customer outcome to own. Measure what happens. That’s your lab model in action.

2. Weaponize your data advantage.

Your accumulated customer data is the moat that strengthens with AI rather than weakening. Feed it into fine-tuned models. Train systems that get smarter with every customer interaction. Make your institutional knowledge a compounding advantage, not a static archive.

3. Invest in distribution, divest from feature differentiation.

Features are commoditized. Accept it. The assets that matter now are brand, trust, sales relationships, compliance certifications, and enterprise procurement approvals. These are slow to build, hard to fake, and genuinely defensible. Double down on them instead of trying to out-feature a startup that can ship faster.

4. Accept a smaller, more leveraged organization.

Same revenue. Fewer people. More leverage per person. This is the hardest move because it’s the most human one. It means acknowledging that roles people have built careers around may no longer be necessary.

But here’s what the leaner organization enables: faster decisions, shorter feedback loops, domain experts who are closer to customers and closer to the product. The companies that get this right don’t just cut costs. They move faster, learn faster, and build things that fit their customers better than the bloated competitor ever could. The constraint was never the number of people. It was the coordination overhead those people required.

The Startup’s Dilemma

Startups face the inverse problem. Starting has never been easier. Surviving has never been harder.

| Era | Hard Part | Easy Part |
| --- | --- | --- |
| Pre-AI | Building the product | Finding a market (if the product was good) |
| Post-AI | Defending the product | Building the product |

The opportunity is real. A 2-person team can go from idea to production in days, at near-zero cost. You can test 10 hypotheses in the time it used to take to test one. The cost of failure approaches zero, which means you can afford more at-bats. For founders with domain expertise and AI leverage, this is the best time in history to start a company.

But everything that makes it easy for you makes it easy for everyone else. If you can build it in a weekend, so can 500 other people. Every niche is suddenly crowded. Every feature you ship can be cloned before your launch post finishes trending.

So the startup game has shifted. Building is no longer the hard part. The hard parts are learning and distributing.

Speed of learning, not building. The winning team isn’t the one that codes fastest. It’s the one that goes from “shipped” to “understood why it failed” to “shipped the fix” fastest. Learning loops, not build cycles, are the competitive advantage.

Integration depth. Shallow tools get replaced overnight. Deep embedding in customer workflows, the kind where ripping out your product would mean rearchitecting their process, creates switching costs that survive the AI era.

Distribution as a first-order capability. In a crowded niche, the product that wins isn’t necessarily the best one. It’s the one people find first and trust most. Community, brand, go-to-market: these matter more when every competitor can match your features in a week.

Stepping Back: The Human Question

Everything above is an operating model framework. It’s clean and strategic and makes sense on a whiteboard. But operating models are made of people.

A lot of those people are about to find that the skills they invested years in (coordination, process management, translating between specialists) are the skills this new model no longer needs. The problem is structural, not personal.

There’s a counterargument worth engaging with: if building gets cheaper, demand for software explodes, and we need more people, not fewer. The Jevons Paradox applied to code.

I think that’s directionally right and strategically misleading. The freed capacity absolutely does create net new innovation. Humanity will tackle problems in the next two years that were logistically impossible last year.

But the “more demand creates more jobs” framing misses a critical variable: individual agency. The demand is for domain experts who can wield AI, not for the coordination roles the old model required.

The transition isn’t automatic. It takes real effort, real time, and the willingness to step outside a comfort zone that the market rewarded for years. This could be zero-sum for specific people who don’t make the shift. That’s the honest part.

The honest guidance, not the optimistic spin:

Pick a domain. Go deep. Become the person who knows what needs to be true in a specific area: compliance, security, customer success, an industry vertical, a technical discipline. Then learn to wield AI as your leverage to make those things true.

The path isn’t starting over. Consider the program manager who spent 3 years coordinating between the compliance team and engineering. They already understand the compliance domain better than they realize. The shift is recognizing which domain knowledge you’ve already accumulated and deepening it deliberately, rather than maintaining the generalist coordination skills that the market valued five years ago.

The market right now is flooded with generalists and starved for domain experts who can wield AI. The people who hear this early and redirect their trajectory will find themselves disproportionately valuable. The window is open. It won’t stay open indefinitely.

The Moat Is Clarity

The transition is real and it’s moving fast. Some of it is painful. But here’s what makes it exciting: for the first time, the distance between understanding a problem and solving it has collapsed to nearly zero. A domain expert with AI leverage can build in days what used to take teams months. A 2-person startup can test ten hypotheses before a traditional company finishes its planning cycle. Problems that were too niche, too expensive, too logistically complex to tackle are suddenly within reach.

More software will be built in the next two years than in the previous twenty. Most of it will be built by people who would never have called themselves builders before.

The operating model for when building is free isn’t a theory. It’s already here. The people who see it clearly aren’t waiting for permission. They’re already building.